This is part of a 3 part series!

Part one: General concepts: https://www.vicharkness.co.uk/2019/01/20/the-art-of-defeating-facial-detection-systems-part-one/

Part two: Adversarial examples: https://www.vicharkness.co.uk/2019/01/27/the-art-of-defeating-facial-detection-systems-part-two-adversarial-examples/

Part three: The art community’s efforts: https://www.vicharkness.co.uk/2019/02/01/the-art-of-defeating-facial-detection-systems-part-two-the-art-communitys-efforts/

Finally, we move on to the aim of the piece: the efforts of the art community. Here are what I consider to be some of the more interesting techniques in use.

Side note: I’ll be commenting on how obvious these techniques are. Why is this important, I hear you ask? In London, a mass trial of facial detection/recognition systems is being undertaken. Don’t want to partake? You’ll get a talking to. Protest further? You might be risking a fine: https://www.independent.co.uk/news/uk/crime/facial-recognition-cameras-technology-london-trial-met-police-face-cover-man-fined-a8756936.html. With stories like this cropping up, people are likely to be looking for covert methods of avoiding facial detection/recognition systems.

CV Dazzle

Perhaps the best-known artistic counter-detection method is CV Dazzle. The work of Adam Harvey, it makes use of the principle that I described in the first post of this series: that algorithms rely on light and dark regions in the face to perform detection. Harvey’s work uses dramatic makeup and hairstyles to break up the symmetry of the face and to change these balances. He also appears to be a fan of obscuring the top of the nose, in the region between the eyes. Some examples can be seen here:

Sources: Adam Harvey (https://cvdazzle.com/)

The techniques seen here go against conventional makeup trends. Traditionally, makeup is used to emphasise key features: eyeliner to make eyes look bigger, lipstick to make lips look fuller. CV Dazzle aims to draw attention away from them. Symmetry, something else rather important in judging conventional beauty, is also avoided in the CV Dazzle looks.

So how well does it work? In some DIY trials, quite well! The camera system used struggled quite a bit to detect these faces in still images. It would be interesting to replicate the looks in the real world (probably through the use of wigs), and see how they work from multiple angles.

With the success of these looks, they seem like a genuinely viable option for defeating facial detection systems. They wouldn’t be suitable for your government ID, but in the real world they simply look quirky. Whereas you may be asked to remove a helmet or mask upon entering a shop, there would likely not be an issue with these styles. It is quite possible that they could protect you from some of the more futuristic envisioned uses of facial detection/recognition systems, such as automatic demographic detection whilst you wait in a shop queue, used to better target the delivery of advertisements.

On the subject of makeup, there are numerous other trials in this area taking place. A simple YouTube search for “facial detection makeup” will find you many tutorials on how to create such styles, as well as people playing with the effects of makeup on their mobile phones’ face unlock systems.

Face Dazzler app

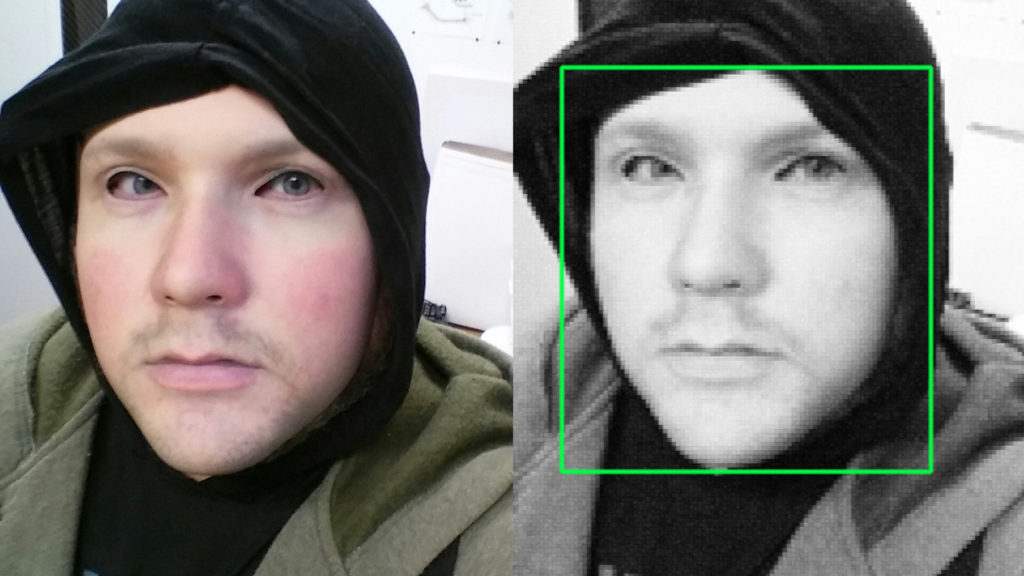

Face Dazzler is an app which is designed to try and prevent automated facial detection/recognition from being able to take place, through the distribution of disruptive shapes in an image. The app used to be available on the Google Play store, but has since been pulled for unknown reasons. When I played with it I found it to crash frequently and seemingly randomly, so perhaps this had something to do with it. Still, it remains available on third-party app stores.

The app works by adding black and white shapes over an image. The idea is that the picture is still recognisable to other humans, but not to automated systems. This means that you can still upload photos of yourself to Facebook for friends to see, without having to worry about the automatic identification which is carried out by the Facebook systems. Examples of the results can be seen here:

These obfuscations are indeed sufficient to cause classification issues in a facial detection/recognition system, but they’re also sufficient to be very annoying to look at. Large swathes of the face are obscured, making the contents hard to see. They would also presumably flag you as a bit of a weirdo to your friends and family.

I would say that the concept of this app is quite good, but the execution is poor. Perhaps a version which generated adversarial examples based on input images would be a better alternative; this would be quite functional due to the direct processing of the images, but may be found to be too computationally complex for a mobile phone.
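For the curious, the basic shape-overlay idea can be sketched in a few lines. This is my own rough approximation, assuming a grayscale image stored as a list of rows and randomly placed rectangles; I don’t know how Face Dazzler actually chooses its shapes, and the `dazzle` function and its parameters are hypothetical.

```python
import random
from typing import List

Image = List[List[int]]  # grayscale pixels, 0 (black) to 255 (white)


def dazzle(img: Image, n_shapes: int = 6, max_size: int = 3, seed: int = 0) -> Image:
    """Return a copy of img with random black or white rectangles painted on it."""
    rng = random.Random(seed)  # seeded so the result is repeatable
    h, w = len(img), len(img[0])
    out = [row[:] for row in img]  # leave the original image untouched
    for _ in range(n_shapes):
        # Pick a rectangle that fits entirely inside the image.
        shape_h = rng.randint(1, min(max_size, h))
        shape_w = rng.randint(1, min(max_size, w))
        top = rng.randint(0, h - shape_h)
        left = rng.randint(0, w - shape_w)
        colour = rng.choice((0, 255))  # pure black or pure white
        for y in range(top, top + shape_h):
            for x in range(left, left + shape_w):
                out[y][x] = colour
    return out


# Usage: a flat mid-grey 8x8 "photo" with six shapes painted over it.
photo = [[128] * 8 for _ in range(8)]
obscured = dazzle(photo)
```

Placing the shapes at random is the crudest possible approach. Something smarter would put them over the regions a detector relies on, which is presumably where the real effort lies.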

Privacy visor

The Privacy Visor was a style of glasses featuring a layer of transparent mesh over the top. Facial recognition systems do not normally struggle with glasses, but these were designed to fool them by not just obscuring features but also manipulating the way in which light is reflected towards the target camera.

Source: Tim Hornyak (https://www.pcworld.com/article/2969732/privacy/how-japans-privacy-visor-fools-facerecognition-cameras.html)

The white plastic sheeting is at an angle, especially over the nose area. This is designed to reflect overhead light into the camera, dazzling it to obscure the face. The project was reportedly fundraising for full-scale development and sale. This, however, does not appear to have happened.

That said, the concept for the glasses is quite good. Although a bit oversized, they look like they could be a fashion gimmick. Perhaps in the future someone will revisit this concept, creating a more compact version which does make it to full-scale production.

Privacy Visor (again)

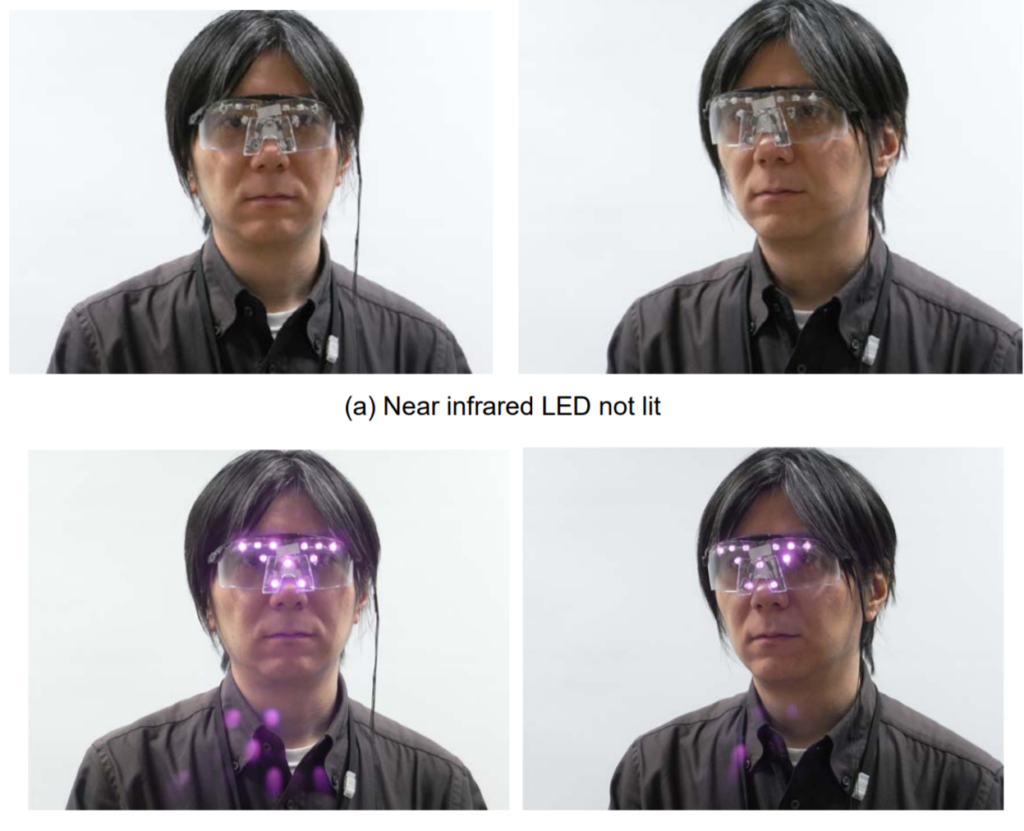

Confusingly, another developer has created a style of glasses for defeating facial detection and also named them Privacy Visor. These ones feature near-infrared LEDs around the edge, which dazzle cameras.

Source: Isao Echizen (http://www.nii.ac.jp/userimg/press_20121212e.pdf)

These glasses reportedly work quite well, although it would be hard to test this without having a pair of them to hand. The glasses themselves quite obviously look high-tech, which draws attention to the wearer. Perhaps the LEDs could be better embedded into the frames, leading to a more subtle appearance.

Along the lines of this idea, I have also seen a concept piece consisting of glasses which cast lights on the wearer’s face. The aim of this was to change the balance of light/dark in the face through highlighting and shadows, causing facial detection systems to struggle. The system worked quite well but again had the issue that it was very attention-drawing.

Reflectacles

Yet another pair of glasses designed to disrupt facial detection systems, we have the Reflectacles. These glasses, as you may have guessed, are also designed to reflect light. They appear to be targeted towards people who want to avoid being picked up by cameras in low-light conditions, but can reflect both visible and infrared light.

Source: http://www.reflectacles.com

The dazzling effect of these glasses seems to be quite effective at night, and when flash photography is in use. The website advises that if you are using a mobile phone which unlocks via facial recognition, you will have to remove these glasses for it to work.

The glasses are available in a range of colours, with a guide provided for which colours are more and less effective. The frames themselves don’t overtly draw attention, and don’t look like they’re trying to do anything fancy. Because of this, they seem to be quite a viable choice for avoiding camera systems.

Another crowd-funded project, these seem to have actually come to fruition: they are available to purchase on the Reflectacles website.

Stealth Wear

Another project from Adam Harvey, creator of CV Dazzle: Stealth Wear. Stealth Wear is a range of thermally-opaque clothing pieces which aim to block overhead thermal surveillance, such as may be used by UAVs/drones.

Source: Adam Harvey (https://ahprojects.com/stealth-wear/)

The main point that jumps out at me is that these people still have legs which are uncovered, and therefore aren’t too thermally invisible. I have also heard that the silver-plated synthetic fabric which is used in the manufacturing of thermally-opaque garments is, as you would expect, very insulating. Wearing these garments for any real length of time would probably be very uncomfortable. The price is also a big issue, with the burqa design being priced at £1,500.

Items of clothing such as these might also be suitable for fooling some forms of facial detection/recognition systems due to their reflectivity. They look quite high tech, but again, probably would be passable as quirky fashion.

Flashback

In a similar vein to the Stealth Wear range is the Flashback Photobomber Hoody.

This hyper-reflective hoody is designed to prevent flash photography, such as may be used by annoying paparazzi. The hoody retails at ~$180, making it a much cheaper alternative to the Stealth Wear range. There also appear to be third-party rip-off versions of the concept floating around for purchase online.

Will this jacket stop a regular facial detection/recognition system? As with Stealth Wear, maybe. Maybe not. It depends on the angle of the lighting. It is possible that it may work well with low-light surveillance systems, as the Reflectacles are designed to do.

This jacket has the added bonus that it looks like a standard waterproof jacket. Unless you recognised the subtle logo, you most likely would not suspect that anything was amiss. A scarf made of the same material is also said to be available as part of the range. There are other alternatives too, such as the ISHU scarf, which interweaves the reflective material with standard fabric to create a visually appealing effect.

Hyperface

Yet another Adam Harvey project, we have Hyperface. Hyperface is still in the prototype phase, but promising. It is a novel pattern which appears as a face to facial detection systems, thus lowering their confidence in the wearer’s face. The patterns are designed to be algorithm-specific, although a design targeted towards one particular algorithm will likely have some success with other systems.

Source: Adam Harvey / ahprojects.com

In the future, this material could be used to produce various styles of fashion: T-shirts, hoodies, perhaps even onesies or hats.

The pseudo-faces seen in the above prototype design make it quite distinctive, but not to the uninformed observer. Again, this would look like quirky fashion unless you knew about the concept. If you were working within the domain, it would probably be quite easy to pick out in a crowd.

URME Surveillance

URME Surveillance sells 3D printed masks of artist Leo Selvaggio’s face for the low low price of $200.

Source: URME Surveillance (http://www.urmesurveillance.com/)

This will not stop a facial detection system, but it will stop a facial recognition system in a way. To a casual observer you are showing your face, but the system identifies you as this guy, not yourself. There are of course some shortcomings to the mask, mainly that if you look too hard it’s obviously a mask, and that you cannot speak whilst wearing it. Still, it’s an interesting concept in my opinion.

It is also possible to download a copy of the face for home printing/DIY mask making, and pre-made versions are available for $1 on Amazon.

Source: URME Surveillance (http://www.urmesurveillance.com/)

Honorable mention: Data-masks

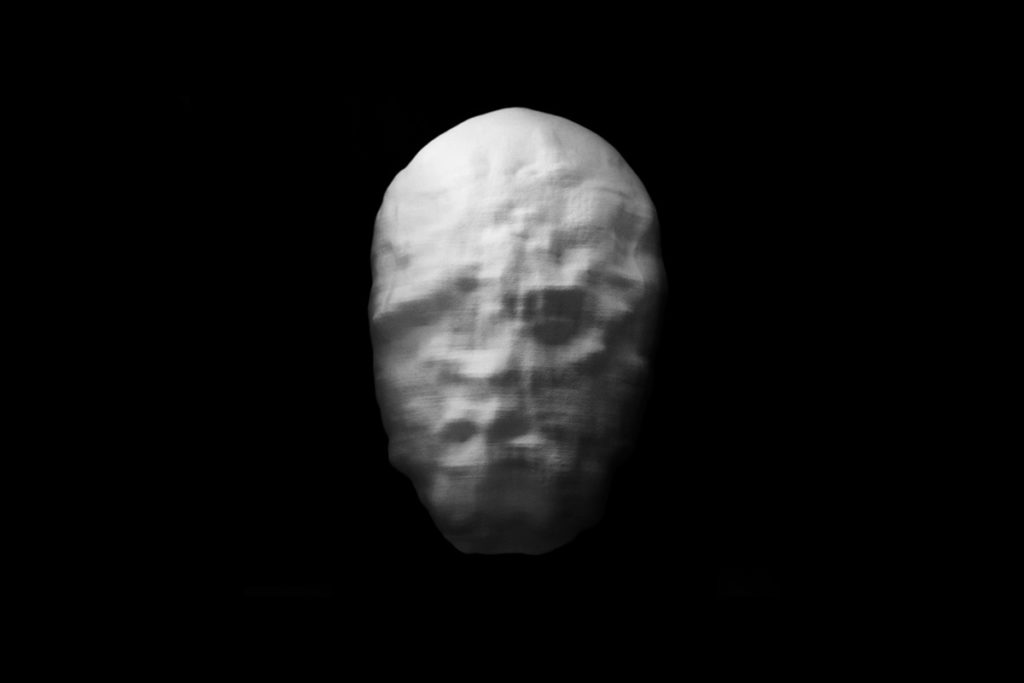

Data-masks is an art project which, although designed to fool facial detection systems, is not for wearing. Instead it is an art installation of pseudo-faces.

Source: Sterling Crispin (http://www.sterlingcrispin.com/data-masks.html)

These masks have been created through a combination of facial detection and genetic algorithms. Each mask started life as a 3D model of an oval. A genetic algorithm was then used to gradually mutate the shape of the oval, with the detection confidence output of a facial detection system serving as the fitness function. This caused the ovals to gradually evolve in appearance until, in the eyes of the facial detection system, they were faces.
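The evolve-until-it-looks-like-a-face loop can be sketched roughly as follows. This is a toy, not Crispin’s actual method: the genome is just a short list of numbers rather than a 3D mesh, `toy_confidence` is a hypothetical stand-in for a real detector’s confidence score, and for brevity the sketch uses mutation with elitist selection only (no crossover).

```python
import random
from typing import Callable, List

Genome = List[float]  # stand-in for the shape parameters of a mask


def toy_confidence(genome: Genome) -> float:
    """Hypothetical detector: returns a confidence between 0 and 1.

    A real set-up would render the mask and ask a face detector instead.
    """
    target = [0.2, 0.8, 0.5, 0.8, 0.2]  # arbitrary "face-like" parameters
    err = sum((g - t) ** 2 for g, t in zip(genome, target)) / len(target)
    return 1.0 / (1.0 + 10.0 * err)


def evolve(
    fitness: Callable[[Genome], float],
    genome_len: int = 5,
    pop_size: int = 20,
    generations: int = 60,
    sigma: float = 0.05,
    seed: int = 0,
) -> Genome:
    """Mutate a population of identical "ovals" towards higher fitness."""
    rng = random.Random(seed)
    # Every individual starts as the same featureless shape.
    population = [[0.5] * genome_len for _ in range(pop_size)]
    for _ in range(generations):
        # Keep the top quarter unchanged (elitism), so the best never gets worse.
        population.sort(key=fitness, reverse=True)
        parents = population[: pop_size // 4]
        # Refill the population with randomly perturbed copies of the parents.
        children = [
            [g + rng.gauss(0.0, sigma) for g in rng.choice(parents)]
            for _ in range(pop_size - len(parents))
        ]
        population = parents + children
    return max(population, key=fitness)


start = [0.5] * 5
best = evolve(toy_confidence)
print(toy_confidence(start), toy_confidence(best))
```

The point to take away is that the algorithm never needs to know what a face is. It only needs a number from the detector saying how face-like the current shape appears, and it keeps whichever mutations push that number up.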

Source: Sterling Crispin (http://www.sterlingcrispin.com/data-masks.html)

Reportedly, Facebook’s automatic facial detection/recognition system detects/classifies faces in them. In my own tests, I found that a test camera system would frequently (but not always) detect faces in them.

As time goes on, I’m sure more interesting techniques will appear. Hopefully in a few years I’ll be able to revisit this subject and write about all the cool new things available!

